There's No I in Team Unless It's a Solo AI Team

Solo AI sessions give you one person's taste. Teams give you your first audience — the friction that makes the thing better before anyone else sees it. What would your team build if AI amplified what you could do together?

My notifications are pretty busy these days.

It’s not just one crowd either. My fancy goat lady group, my techie friends, Slack communities, and one-on-one chats with folks. Different connections are sharing what their AI said, what it made, what it figured out. Screenshots of conversations. Little moments of delight. “Look at this.” I love these. Keep them coming.

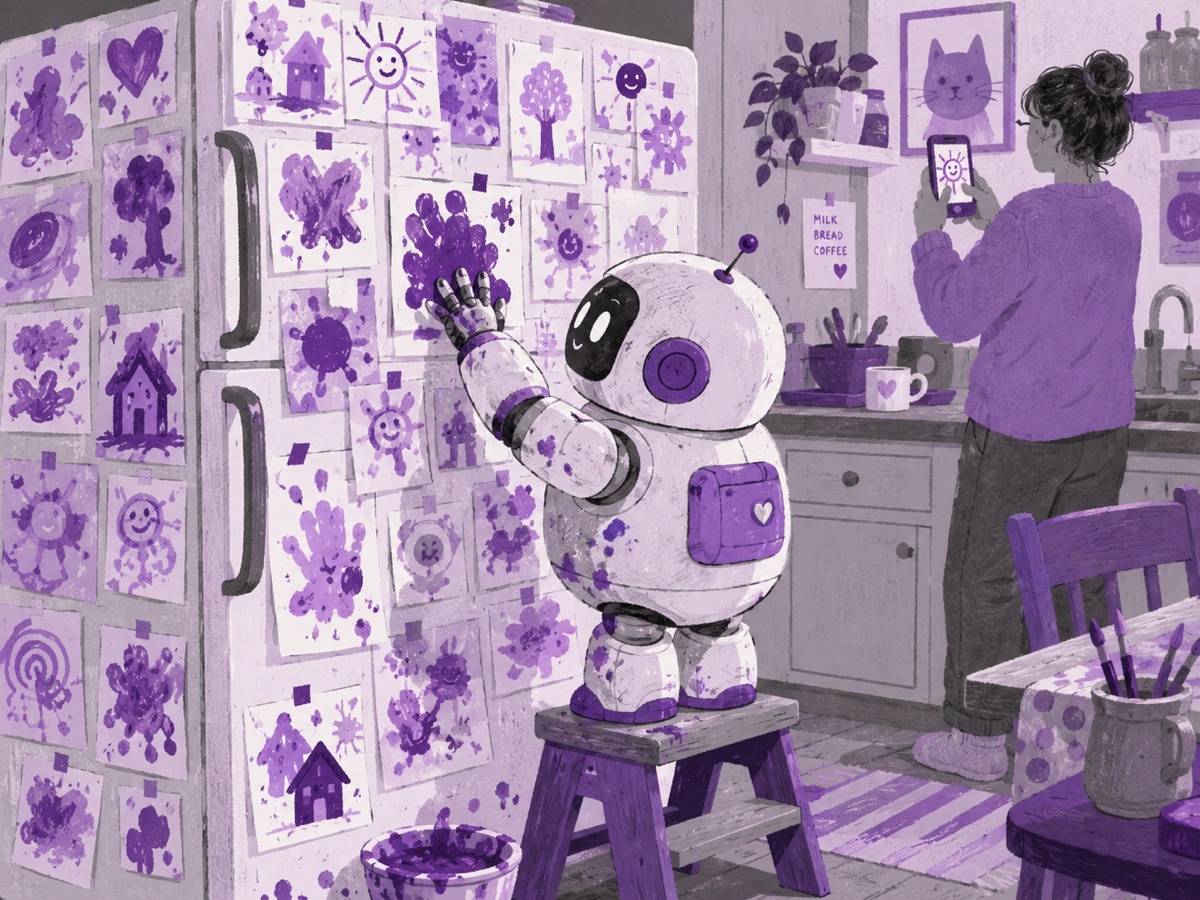

It’s basically a parents’ group chat, but for AI. Every output is a finger painting being shared with family. Look what my little robot did.

Noticing

The conversations are almost always about what one person did with their AI. The solo session. The private thread. The individual eureka.

And that’s fine for a lot of things. It’s not fine for building products.

Here’s the problem with one person and their AI: you only get one person’s taste. Some people have extraordinary taste. The best product managers I’ve worked with have it. That instinct for what’s right before they can fully explain why. But taste is rare. And even the people who have it need early eyes on their work. Someone to say “this isn’t landing” or “you buried the best part” before it’s out in the world.

A team isn’t just more hands. It’s your first audience. It’s the friction that makes the thing better before anyone else sees it.

Some companies are doing both at once. It’s worth noting that not every AI layoff is really an AI layoff. Sam Altman put it plainly: “Almost every company that does layoffs is blaming AI, whether or not it really is about AI.” Some of this is genuine transformation. Some is simply an excuse for other business realities. It’s hard to tell which is which from the outside, and that muddiness matters, because it means we’re potentially building organizational philosophies around announcements that are partly PR.

Coinbase may be sincere. The company just laid off 700 people and announced they’re experimenting with “one-person teams” where a single person, backed by AI agents, handles the work of an engineer, a designer, and a product manager combined. Brian Armstrong described the goal as rebuilding Coinbase as “an intelligence, with humans around the edge aligning it.”

Humans around the edge. The robots in the center.

The shortcut hiding inside this

Patrick Lencioni’s The Five Dysfunctions of a Team describes the conditions that cause teams to fail. At the base of the pyramid is the most foundational one: absence of trust. You can’t build anything else without it. Above that, fear of conflict. Then lack of commitment. Then avoidance of accountability. Then inattention to results.

It’s a useful framework. I’ve watched these dysfunctions play out in nearly every organization I’ve been part of. And when I heard about Coinbase’s one-person team experiment, my first thought was: is this just the most expensive conflict-avoidance strategy ever invented?

Teams are hard. Humans are conflict-avoidant. The number one problem I see with human collaboration is avoiding conflict and letting it build up. Getting three people with different expertise and different opinions to align on something and actually ship it together is genuinely difficult. A single person with AI doesn’t have that problem. No disagreements. No one to push back on your taste. No friction.

But friction is often where the good stuff lives.

Roles collapsing is not the same as people collapsing

Here’s what I actually think AI is doing: it’s making it possible for one person to hold more of the stack. An engineer can get design feedback. A PM can write a prototype. The boundaries between roles are getting blurrier.

But that doesn’t mean you only need one person. The collapse of discrete job functions is not an argument for reducing humans. It might actually be an argument for expanding the scope of what a small team can tackle together.

Research from Harvard Business School found that giving an individual access to AI tools raised their output quality by roughly 40%, putting them at about the same level as a traditional two-person human team. Read that one way and you think: great, cut the team in half. Read it another way and you think: imagine what a two-person team with AI could do.

I’m more interested in the second question.

What teams actually make

Think about how OpenStreetMap gets made. Someone maps their neighborhood. A street, a park, a few building outlines. Then someone else adds the next block. Then a community mapper fills in an entire city. No one person could have built it. No one person planned it all in advance. The whole thing emerged from individuals contributing to something bigger than their own patch of ground. I watched this happen up close at the Humanitarian OpenStreetMap Team. Tools that thousands of people rely on today started with one person who believed in something hard enough to start. Then others showed up, questioned it, pushed on it, and built it into something none of them could have imagined alone.

Wikipedia works the same way. One person’s stub article becomes a community’s reference. Someone who knows more shows up and adds to it. Someone else catches an error. Someone else translates it. There’s a saying that floats around about Wikipedia: it only works in practice. In theory, it’s a total disaster. No one knows who said it first. Probably Abraham Lincoln.

I’ve seen that from the inside too. It shouldn’t work. And yet it’s one of the most remarkable things humans have ever built together.

Linus Torvalds announced the Linux kernel in 1991 with a message to a Usenet newsgroup saying it was “just a hobby, won’t be big and professional.” It now runs the majority of the world’s servers, most smartphones, and a significant chunk of everything else. One person’s obsession. Then a community transformed it into something neither could have built alone.

The pattern is the same every time. Solo starts. Collective makes it real.

That’s not nostalgia for old ways of working. It’s an observation about what teams actually produce that solo work doesn’t.

Teams produce learning. Not just from formal knowledge transfer, but from the offhand comment that reframes everything, from watching how someone else approaches a problem, from the junior person who spots what everyone senior has been too close to see.

Teams produce better assumptions. When you’re alone with an AI, the AI is working from your frame. It’s very good at extending your thinking. It’s less good at challenging the foundation of it. A human collaborator who’s built trust with you will tell you when you’re wrong in ways an AI genuinely won’t. Sure, you can try a follow-up prompt, such as my favorite, “make it cynical,” or a multitude of other techniques. But the pushback still starts from your frame.

Teams produce camaraderie. People work harder and stay longer when they feel like they’re building something with someone. This matters for product quality. It also just matters.

And sometimes, a team produces a paradigm shift because one person wanders in who sees the whole thing differently, and the team is open enough to let that change them.

The thing we haven’t figured out yet

We’re in the early, clumsy phase. Most AI use is still solitary because that’s the path of least resistance. You open a chat. You get an answer. You go back to work. And the collaboration that does happen tends to be asynchronous: pull requests, comment threads, shared documents. That’s not nothing. Remote teams have built real things that way.

But asynchronous work doesn’t replace synchronous work. It depends on it. The pull request is better when someone already talked through the approach. The comment thread is shorter when the whiteboard session happened first. And a live code review isn’t just quality control — it’s often where the most learning happens. Someone looks over your shoulder and says “here’s why this breaks at scale” and you never forget it. That doesn’t happen in a comment thread. It definitely doesn’t happen in a solo session with an AI that agrees with your architecture.

What we haven’t figured out is how to bring AI into the synchronous moments — the whiteboard, the live conversation, the meeting where someone says “wait, why are we doing it this way?” That’s where assumptions get challenged. That’s where the one-person-with-good-taste model breaks down fastest, because taste is only as good as the friction that tested it.

The organizations that figure out how to make AI a genuine collaborator inside those moments, not just a tool people use alone before and after them, are going to build things that the one-person-plus-agents model simply can’t.

Collective intelligence with AI is the unlock. We just haven’t figured out how to do it well yet.

Building org structures around the solo async model right now is like wiring a building for a technology that’s already on its way out. The roles can collapse. The humans shouldn’t.

What would your team be able to build if AI amplified what you could do together?

-Kate